From "AI Slop" to the digital canvas: Why teaching AI to paint is on the path to AGI ...

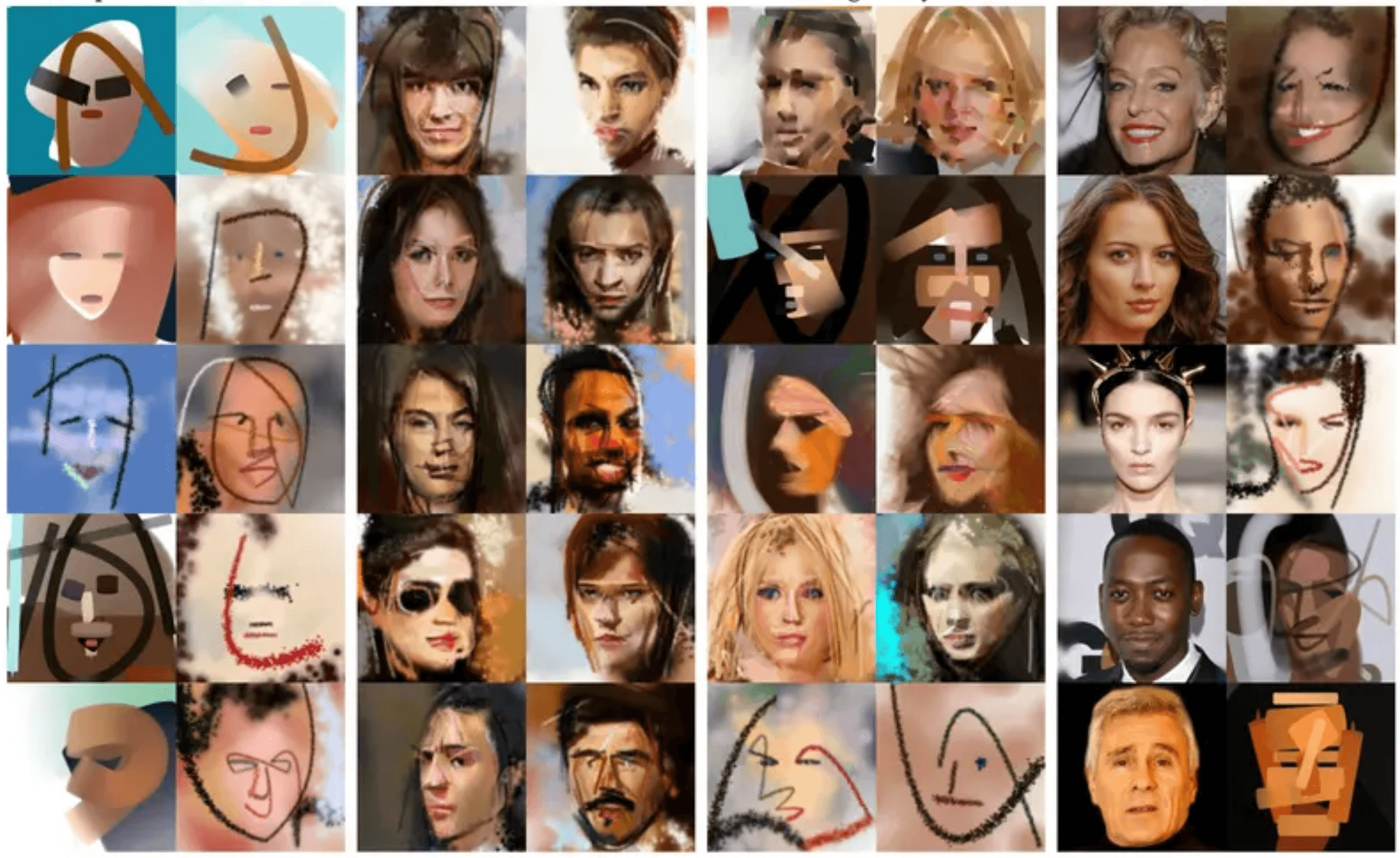

Cover image produced by SPIRAL

If you have spent any time online recently, you have seen it. The internet is rapidly filling up with what critics call "AI slop", hyper-detailed, glossy, instantly generated images of six-fingered hands, anatomically impossible physics, and soulless corporate artwork.

Tools like Midjourney and DALL-E are technological marvels, but their output often feels hollow. Why? Because they aren't painting. They are printing. They use diffusion to mathematically vibrate millions of pixels into a finished grid all at once. There is no process. There is no physical struggle. There is no time.

But far away from the commercial image generators, a different kind of AI research is happening. Scientists are building AI that doesn't just output a picture, but actually learns the physical act of painting from first principles.

This shift from instantly generating "slop" to painstakingly learning to wield a brush, isn't just a fun art experiment. It is a critical stepping stone on the path to Artificial General Intelligence (AGI).

Here is why the antidote to AI slop is also the future of embodied AI.

Phase 1: The Death of the Pixel (Learning from Scratch)

To cure the "slop" problem, researchers had to strip the AI of its god-like ability to manipulate every pixel instantly. They put it in a sandbox called Stroke-Based Rendering (SBR).

The AI is given a blank digital canvas, a virtual brush, and a limited palette. At first, it knows nothing. It scribbles randomly. But using Reinforcement Learning, it is rewarded every time a stroke makes the canvas look slightly more like a target photograph. Over thousands of attempts, the AI learns sequence and consequence. It realizes that if it paints the delicate branches of a tree first, and then slaps a blue sky over the top, the branches disappear. It has to learn to work in layers.

The Art: Look at the benchmark Learning to Paint project (Huang et al.). If you watch the time-lapse of this AI recreating a photograph of a human face, it is eerie. It doesn't print top-to-bottom. It "blocks in" a blurry, flesh-toned underpainting using massive, semi-transparent brush strokes. Then, it autonomously switches to a fine-tip brush, laying down sharp, dark strokes to define the pupils, nostrils, and hair. It behaves exactly like a human art student managing a physical medium.

See it in action: In the Learning to Paint Project by Huang et al., you can watch the actual time-lapse videos of a Reinforcement Learning agent "blocking in" an underpainting before refining the details with smaller strokes, exactly like a human art student.

Phase 2: Constraints Breed True Creativity

AI slop happens because the AI has infinite choices and defaults to the mathematical average of everything it has ever seen. But what happens when you constrain an AI?

When DeepMind built an adversarial drawing agent called SPIRAL, they eventually stopped giving it target photos to copy. Instead, they just asked a mathematical critic: "Does this look like a face?" Because the AI was limited to a simulated, pressure-sensitive pen and only a few dozen strokes, it couldn't be photorealistic.

The Art: SPIRAL had to problem-solve. It developed an unsupervised, abstract style. It realized it could trick the critic into seeing a face by drawing just two scratchy dots and a single, sweeping, jagged line for a mouth. The resulting art looks like a minimalist, nervous charcoal sketch. Without being prompted, the AI invented abstraction because it was the most efficient way to solve a physical problem with limited resources.

Spiral in action, learning to paint …

Phase 3: Adding the "Inner Voice" (2024–2025)

The next massive leap was combining this robotic hand with a Large Language Model (LLM) "brain." Instead of just matching a photo, the LLM acts as the creative director. We moved from an AI that just guesses the next pixel, to an AI that formulates an intent, plots coordinates, and executes a vision stroke-by-stroke.

The Art: MIT’s SketchAgent (published in 2024/2025) is a masterclass in this. It uses a multimodal LLM to draw concepts using an invisible grid and Bézier curves. If you ask it to draw the "Taj Mahal," it doesn't generate a pixel image. It outputs a sequence of mathematical strokes. The system is so sequential that researchers built a collaborative mode: the AI draws a stroke, then you draw a stroke, and the AI adapts its next move based on your addition. It is no longer a tool; it is a collaborative artistic partner.

The Modern Frontier: SketchAgent shows how LLMs now use "string-based actions" to draw stroke-by-stroke, turning language-based reasoning into visual planning. Youtube clip

Phase 4: Progressive Illusions and Temporal Reasoning (2026)

As we enter 2026, the intersection of LLMs and SBR has achieved something that raw image generators simply cannot do: Temporal Spatial Reasoning.

A groundbreaking early 2026 paper introduced Progressive Semantic Illusions (the "Stroke of Surprise" framework). The challenge was to force an AI to draw one object, but plan its strokes so perfectly that adding just a few more lines transforms the image into something entirely different.

The Art: The AI is prompted to draw a "pig." It sketches a very clear, coherent vector drawing of a pig's face. But the AI's "inner brain" is holding a dual-constraint. When you prompt it to keep drawing, it adds five specific delta strokes. Instantly, the structural foundation of the pig is re-contextualised. The pig's floppy ears become the wings of an "angel," and the snout becomes a halo.

Standard AI (like Gemini or DALL-E) cannot do this because it relies on destructive editing, overwriting pixels. This 2026 AI had to understand the future physical state of its canvas. It laid down "prefix strokes" that were semantically valid for Object A, but primed for Object B.

The Leap to AGI

Why does a drawing of a pig turning into an angel matter for Artificial General Intelligence? Because AGI cannot be achieved purely in a text box.

If we want to build a general intelligence that can operate in the real world, whether that is driving a car, performing surgery, or maintaining industrial infrastructure, it must understand embodiment. The physical world is entirely built on constraints, resource management, and cause-and-effect.

In 2026, robotics companies are deploying AI to paint yacht hulls and inspect confined hazardous environments. The software running those robots isn't based on text-prediction; it is based on the exact same first-principles logic as our digital SBR painters. A robot painting a ship needs to perceive the surface, manage its limited paint supply, plan the coverage sequentially, and execute physical movements that cannot be instantly "undone."

Current pixel generators are a dead end for AGI because they are completely detached from physical reality. But an AI that learns to paint from first principles is learning the foundational rules of the universe. It is learning that physical actions take time, that mistakes have structural consequences, and that complex goals require sequential planning.

The next time you see a piece of generic "AI slop," remember that it is just a party trick. The real future of AI is quietly sitting in a digital studio, learning how to mix primary colours and plot Bézier curves, one deliberate stroke at a time.